In health care research and development, expert patients are playing an increasingly important role. They are shaping educational programs for physicians, nurses and newly diagnosed patients, improving treatment regimes for those with chronic disease and having an impact on research.

Increasingly, expert learners are influencing the design and development of online courses. We examine how faculty members can partner and learn with “expert learners” so as to improve the quality of their online programs and courses.

Who Are Expert Learners?

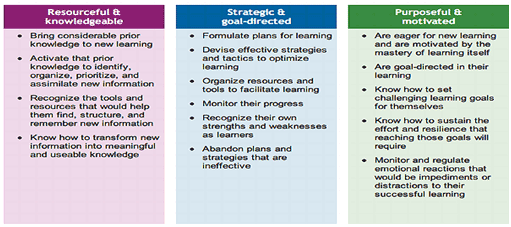

Not all who take courses are experts at the process of learning. An individual can be considered an “expert learner” if, according to recent research, they are:

- Resourceful, knowledgeable and experienced reflective learners.

- Goal directed and strategic in their use of learning resources and their learning style.

- Highly motivated, goal oriented and deliberate.

The following table puts some “flesh” on these bare-bones descriptions of the characteristics of expert learners. It is a summary of the work by the National Centre for the Universal Design for Learning, based in Wakefield, MA (see here for details), which is the source for this table.

Such learners can provide valuable insights into what works, what could work and the conditions under which certain kinds of learning activities and tasks can thrive. Just as expert patients can assist the medical team in delivering quality care, so expert learners can support the drive for quality online learning.

Expert learners are not only students. They can be “peers” such as other faculty, a student who is enrolled in or has completed the course, the supervisor of a faculty member, an advisory committee member, a colleague from business or another organization who is expert both in the field and in helping others learn, an instructional designer, or someone who helps learners all the time, such as a counsellor or librarian. The key is that they understand learning as a process.

Identifying Expert Learners

Given these characteristics, it is clear that not all learners are experts in their own learning. Those who are tend to self-identify. For example, expert learners will ask questions about the process of learning, the rubrics of assessment, the purpose of activities, and the value of simulations and games. They are not challenging the instructor; they want to know how best to plan and maximize the value of the time they invest in learning. They also want to best “fit” the course design to their own learning style.

Faculty members can facilitate self-identification by:

- Encouraging questions about the learning process and learning design associated with their course.

- Encouraging learners to complete a learning style inventory (such as this or one of the many available here) and use the results as a basis for a conversation (in class or online) about learning styles and how they can best use the course.

- Setting activities at various points in a course that ask students to reflect on how they are learning, a change from a focus on course content.

- Creating activities that require more than knowledge-repeating or knowledge-telling, and instead require the learner to engage in what is referred to as knowledge application: taking an idea and using it in an appropriate context, but one not taught in the course. Expert learners are more likely to respond to this challenge than novice learners (see here).

Once identified, expert learners can support faculty members in their development and improvement of online courses, but their expertise in learning needs encouragement and support. One major role of the faculty member is to nurture reflective learning.

Making Best Use of Expert Learners

Currently, most input from learners is secured when the course ends – student feedback. Expert learners can be most helpful before the course is developed – as part of the design team. Expert learners, even from different disciplines, can be helpful at both the design and development stages of the instructional design process.

At the design phase, they can help identify challenges learners will have with pacing and assignments, with accessing a range of different learning materials and in adapting the course experience to different learning styles.

At the development phase, they can review and test materials and activities, suggest new and different activities which may appeal to and engage learners more directly, and assess the workload implications of the course from the point of view of a current learner. In short, one or two expert learners should be regarded as part of the design team.

Piloting Course Components

Another opportunity for learners, whether experts, novices or both, to become involved in improving the quality of online learning, in partnership with faculty, is to test components of courses in development before they are released to the wider student community. Tests of simulations, games, complex activities or assessments can provide useful insights.

Some faculty members make use of focus groups of learners to help understand the challenges of specific courses or activities. For example, faculty who teach statistics have used focus groups to understand the range of challenges they face in designing a course for non-mathematically inclined learners or those with an active resistance to work involving numbers, formulae and calculation. In science and engineering, focus groups have been used to help determine practical projects that can be incorporated into courses.

Student Engagement through Challenge

A variety of programs and courses are using student challenges as project-based courses so as to strengthen both the understanding of complex ideas (e.g. synthetic biology, nanotechnology, machine intelligence, and neuroscience) and how these ideas can be applied to real world challenges.

The annual MIT synthetic biology competition (IGEM Using Standard Parts), entered by learners from high schools (30 teams), colleges and universities (215 teams) from around the world, is an example of learning by challenge (see here for details).

Such work is building on a model of adult learning, which gives emphasis to experiential learning and challenge. Leonard and DeLacey (2002) suggest that such learning has these characteristics:

- Learning is a social activity: group activities and communities aid in the effectiveness of the learning experience because of the basic nature of human beings as social creatures.

- Integrate learning into life: making connections to a learner’s work or life outside the classroom is critical because it provides a context in which the acquired knowledge can be used.

- Enable learning by doing: practice is the best way for a learner to truly gain mastery of a subject or concept.

- Encourage learning by discovery: research indicates that people retain information longer when they are given the opportunity to realize ideas and solutions from their own understanding.

- Remember that individuals have different mental receptors for material: coherence of new material somewhat depends on what a learner may already know. This can both help and hinder learning, and an instructor needs to be cognizant of this fact when delivering material.

- Make it fun: students who are engaged and involved are obviously more open to the learning experience. Fun is not just for children because a playful, non-threatening environment also helps adult students benefit from the experience.

- Build in assessment, but don't delude yourself into thinking you can measure learning: quantitative assessment becomes more difficult with increased content complexity. Also, some learning may take time to digest and is not accurately measurable within the time frame of the course.

The faculty member who involves his or her learners in such a challenge needs to use the experience to not just achieve the formal and informal subject matter learning outcomes, but to engage these learners in a dialogue about the nature of their learning and how they would like to learn, especially using global online learning resources.

The MIT IGEM Competition is just one such competition. Business schools have case competitions (for example, the Scotiabank Ivey Competition), and there are challenges in engineering (here, for example), psychology (here) and many other disciplines. The point is that these activities are highly engaging, very demanding of the learners and a unique opportunity to engage learners in a conversation about how they learn and prefer to learn, which is a way of securing insights into what is “next” in terms of the design challenges for online learning.

This work relates to quality in online learning in two ways. First, intense student engagement in such activities can reveal a lot about how students learn and what gets in the way of their learning. Focused conversations with such learners about how they use technology to support their learning and how they would like their courses to be designed to make better use of such resources can be illuminating with regard to course design. Secondly, testing specific online supports for their learning is something that these engaged students seem ready to do.

Using Analytics to Secure Insights into Quality

In the last five years, there has been a substantial growth in the use of learning analytics – tracking of student behaviour and activities through the data collected by learning management systems. At this time, such systems can be helpful in:

- Using behavioural data, performance data and activity reporting systems to identify students “at risk” and enable early intervention (see the work at Purdue as an example).

- Most large corporations, such as Pearson, Blackboard and Desire2Learn, have invested heavily in analytics to capture a significant amount of data, including the time spent on a resource, frequency of posting, number of logins, participation in forums and discussions, time spent on a simulation and so on. This is helpful not only in supporting individual learners, but also in assessing the effectiveness of a course design: what kind of use do learners make of the resources developed for them?

- Social Networks Adapting Pedagogical Practice (SNAPP), a network visualization tool developed by researchers at the University of Wollongong (Australia), can analyse students’ interactions in a forum and display them as a network diagram, which helps faculty identify key connections and disconnected students and support collaborative learning in a web-based learning environment. Such data can also aid the redesign of activities and projects to foster better collaboration.

- The Khan Academy, a free website where students can access thousands of tutorial videos covering hundreds of subjects, provides a completely online learning environment and incorporates learning analytics that enable faculty to assess progress and focus on an individual student. Its reports include a progress summary, daily activity report, class goals report, progress report, student activity report and student focus report. Using these analytics tools, students can review their learning progress, and faculty are supported in personalizing learning for students in need of more help in specific areas. Similar analytic tools are being “designed in” to many learning management systems.

- The Student Experience Traffic Lighting (SETL) project at the University of Derby (UK) integrates data from different systems to provide an overview of a student’s level of engagement with the institution and proactively identifies students at risk of withdrawing, with the aim of improving retention rates.
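As a minimal sketch of the early-intervention idea in the list above, the following Python combines a few activity measures into a traffic-light flag for each student. The field names, thresholds and sample records are hypothetical and chosen only for illustration; they are not taken from the Purdue work, the SETL project or any learning management system, whose actual models are not described here.

```python
from dataclasses import dataclass


@dataclass
class Activity:
    """Hypothetical weekly activity summary exported from an LMS."""
    student: str
    logins: int
    forum_posts: int
    minutes_on_resources: int


# Illustrative thresholds only; real cut-offs would need local validation.
MIN_LOGINS = 3
MIN_POSTS = 1
MIN_MINUTES = 60


def traffic_light(a: Activity) -> str:
    """Return 'green', 'amber' or 'red' from the number of low-activity signals."""
    warnings = sum([
        a.logins < MIN_LOGINS,
        a.forum_posts < MIN_POSTS,
        a.minutes_on_resources < MIN_MINUTES,
    ])
    if warnings == 0:
        return "green"
    if warnings == 1:
        return "amber"
    return "red"


def flag_students(records: list[Activity]) -> dict[str, str]:
    """Map each student to a traffic-light flag."""
    return {a.student: traffic_light(a) for a in records}


# Made-up sample data for three students.
sample = [
    Activity("student_a", logins=5, forum_posts=2, minutes_on_resources=120),
    Activity("student_b", logins=4, forum_posts=0, minutes_on_resources=90),
    Activity("student_c", logins=1, forum_posts=0, minutes_on_resources=15),
]
flags = flag_students(sample)
print(flags)
```

A flag of this kind is only a prompt for a conversation with the student. It says nothing about why activity is low, which is where dialogue with learners remains essential.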

While not all institutions are using analytics in a systematic way at this time, they will increasingly encourage faculty to do so, given that the major learning management systems have, or will have, powerful analytics capabilities. Faculty can begin to use evidence of use and performance as a basis for course revision.
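The forum analysis mentioned above for SNAPP can be approximated, very roughly, by counting who replies to whom. The sketch below is not SNAPP's method and produces no diagram; it assumes a hypothetical export of (author, replied_to) pairs and simply lists enrolled students who have no forum connections, which is one possible starting point for redesigning a collaborative activity.

```python
from collections import Counter


def interaction_summary(enrolled, replies):
    """Count forum connections per student and list those with none.

    enrolled: iterable of student identifiers (hypothetical names)
    replies:  iterable of (author, replied_to) pairs from a forum export
    """
    enrolled = list(enrolled)
    degree = Counter({s: 0 for s in enrolled})
    for author, target in replies:
        if author == target:
            continue  # ignore self-replies
        degree[author] += 1
        degree[target] += 1
    disconnected = sorted(s for s in enrolled if degree[s] == 0)
    return degree, disconnected


# Made-up sample data: four enrolled students, three replies.
enrolled = ["ana", "ben", "chen", "dara"]
replies = [("ana", "ben"), ("ben", "ana"), ("chen", "ana")]

degree, disconnected = interaction_summary(enrolled, replies)
print("Connections per student:", dict(degree))
print("Disconnected students:", disconnected)
```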

Survey Data

Currently, almost every learner completes an end-of-course evaluation, with some being more elaborate than others. Many of these are “old designs” focused on satisfaction and relevance (see here for an example), though increasingly measures of student engagement are being used (see here).

When questions are added that focus specifically on learners’ online experience, significant insights can be gathered to improve the design, development and implementation of online programs and courses, as can be seen from the data gathered by BCcampus from students at a variety of institutions (here) or the national survey undertaken in Australia (here).

Conclusion

There are a variety of ways for a faculty member to improve the quality of online programs and courses that can involve learners as part of the process of continuous improvement.

The key point is just this: it is a process of continuous improvement. The expert learner, learning analytics, experiential learning involving learner reflection, survey data and other sources all feed into an ongoing process of continuous improvement, linked to the analysis, design, develop, implement, evaluate model of instructional design. Experience suggests that when learners become actively engaged in the design of their learning, many of them will improve their performance. While some just want to “get the materials, get their grades and get out”, many more want to learn and learn how to learn.

References

Leonard, Dorothy A., and Brian J. DeLacey. "Designing Hybrid Online/In-Class Learning Programs for Adults." Harvard Business School Working Paper, No. 03-036, December 2002.